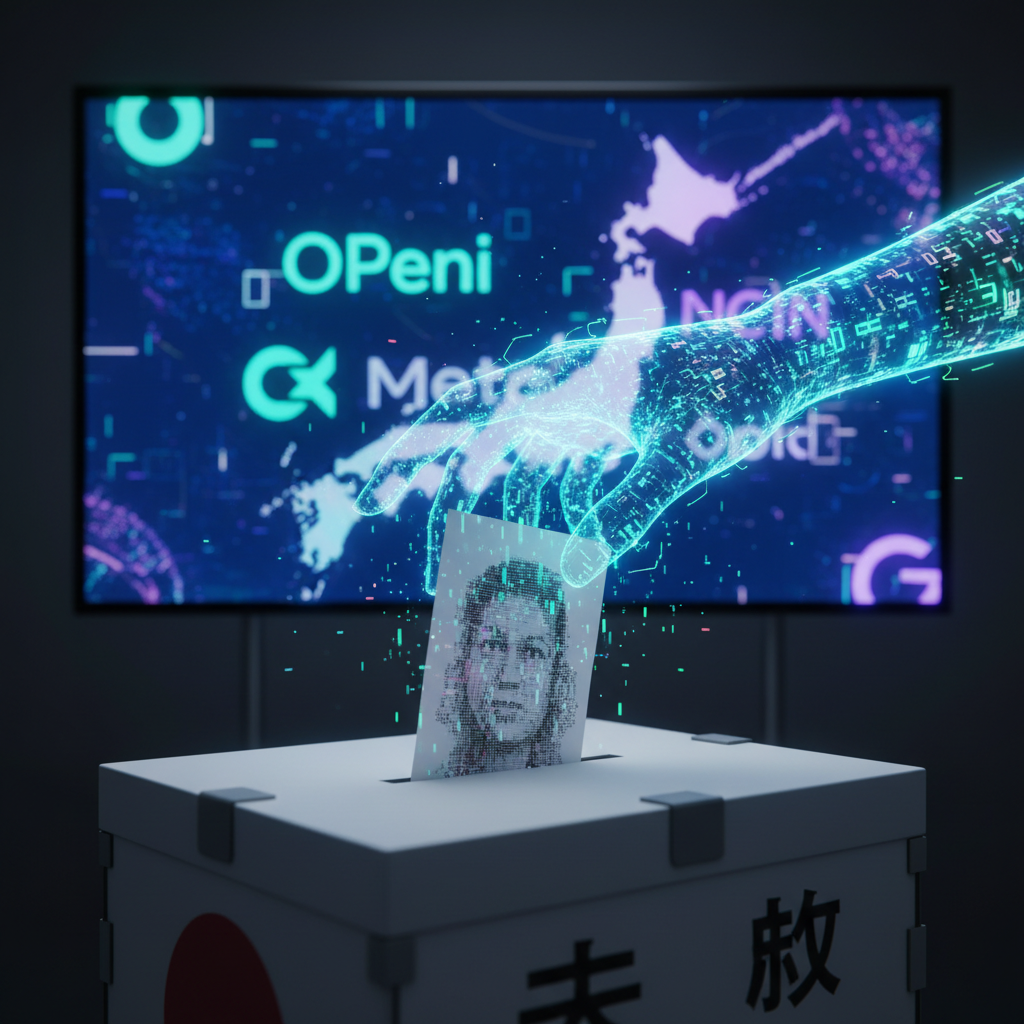

The delicate machinery of democracy, built upon the bedrock of trust and verifiable information, faces an unprecedented challenge. The threat comes not from foreign armies or economic blockades but from an enemy far more subtle and pervasive: the AI-generated deepfake. As a journalist from Japan, a nation where precision matters and societal harmony is highly valued, I observe this phenomenon with particular apprehension. The engineering is remarkable, yet its potential for misuse is profound, especially as critical elections approach around the world, including our own national and prefectural elections.

At its core, a deepfake is a synthetic media file, typically video or audio, that has been manipulated or generated by artificial intelligence to depict someone saying or doing something they never did. Imagine a politician, known for their measured public persona, suddenly appearing in a viral video delivering a divisive speech they never uttered. Or an opposition leader caught on audio making a compromising statement that is entirely fabricated. These are not mere Photoshopped images; they are sophisticated, often hyper-realistic, digital constructs designed to deceive.

The Big Picture: A Digital Puppet Master

What does this system do? It creates a digital illusion, a convincing simulacrum of reality that can be deployed at scale. In the context of elections, the goal is to manipulate public perception, sow discord, or discredit political figures. Traditional propaganda often relies on textual misinformation or crudely altered images. Deepfakes instead borrow the persuasive force of sight and sound, exploiting our instinct to trust what we see and hear. Powerful generative AI models are now widely available, from OpenAI's DALL-E and Sora to Meta's AI image generators. As a result, the tools for creating such sophisticated forgeries are no longer confined to state-sponsored actors or highly skilled specialists. They are becoming democratized, accessible to anyone with a computer and malicious intent.

The Building Blocks: Neural Networks and Data

To understand how deepfakes work, we must first appreciate their fundamental components. The primary enabling technology is deep learning, a subset of machine learning that uses artificial neural networks. These networks are loosely inspired by the brain. They are built from layers of interconnected nodes, or "neurons", and they learn by adjusting the strengths of those connections during training on vast datasets of images, video, and audio.

- Generative Adversarial Networks (GANs): A widely used architecture for generating synthetic faces. A GAN consists of two competing neural networks: a 'generator' and a 'discriminator'. The generator's task is to create synthetic data, such as a fake image or video frame, from random noise. The discriminator's job is to distinguish real data from the generator's fakes. The two play a continuous game of cat and mouse: the generator tries to fool the discriminator, and the discriminator gets better at spotting fakes. This adversarial training pushes both networks to improve, yielding increasingly realistic outputs.

- Autoencoders, including Variational Autoencoders (VAEs): GANs excel at generating novel content, but the classic face-swap deepfake is built on autoencoders. An autoencoder learns to compress (encode) an input image into a compact, lower-dimensional representation and then reconstruct (decode) it. In the typical face-swap setup, a single shared encoder is trained alongside two decoders: one reconstructs only the source person's face, the other only the target's. The shared encoder captures pose, expression, and lighting. So a frame of the source person can be passed through the encoder and then through the target's decoder, rendering the target's face with the source's expression.

- Large Language Models (LLMs) and Diffusion Models: Newer techniques can use LLMs, such as those developed by Anthropic or Google, to write convincing scripts. Voice-cloning speech models then turn those scripts into audio. Diffusion models, the family behind video generators such as OpenAI's Sora, render highly realistic video. A diffusion model is trained by gradually adding noise to real images and learning to reverse each step. To generate, it starts from pure noise and removes it step by step until an image emerges. This allows unprecedented control over content, from specific facial expressions to entire scenes.
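To make the diffusion description more concrete, here is a minimal Python sketch of the forward (noising) half of the process only. It assumes a linear noise schedule of the kind used in early diffusion research: 1,000 steps, with the per-step noise variance rising from 0.0001 to 0.02. The function names are hypothetical and no real library is involved. The learned part, a neural network that predicts and removes the noise step by step, is deliberately left out.

```python
import math
import random


def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances beta_t, rising linearly (an assumed, commonly used schedule)."""
    if num_steps == 1:
        return [beta_start]
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alphas(betas):
    """alpha_bar_t = product of (1 - beta_s) for s <= t: how much of the original signal survives."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def noisy_sample(x0, t, alpha_bars, rng):
    """Jump straight to step t: x_t = sqrt(alpha_bar_t) * x0 + sqrt(1 - alpha_bar_t) * noise."""
    a = alpha_bars[t]
    return [math.sqrt(a) * x + math.sqrt(1.0 - a) * rng.gauss(0.0, 1.0) for x in x0]


if __name__ == "__main__":
    rng = random.Random(0)
    pixels = [0.8, -0.3, 0.5, 0.1]  # a toy four-pixel "image" scaled to [-1, 1]
    alpha_bars = cumulative_alphas(linear_beta_schedule(1000))
    for t in (0, 250, 999):
        noisy = noisy_sample(pixels, t, alpha_bars, rng)
        print(t, round(alpha_bars[t], 5), [round(v, 2) for v in noisy])
```

Under this schedule, alpha_bar falls to roughly 0.00004 by the final step, so almost none of the original signal remains. That is why a generator can begin from pure random noise and learn to walk the process backwards.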

Step by Step: From Input to Output

Let us walk through a simplified example of creating a deepfake video of a public figure, say, a candidate for the Diet, delivering a speech they never made.

- Data Collection: The first step involves gathering a substantial amount of data on the target individual. This includes numerous images and video clips of their face from various angles, with different expressions, and under diverse lighting conditions. For audio deepfakes, recordings of their voice are needed. Older systems required hours of speech, while some recent voice-cloning tools can work from a few minutes or less. This data trains the AI to capture the target's unique facial structure, mannerisms, and vocal patterns.

- Training the AI: A GAN- or autoencoder-based system is then trained. For a face swap, the system learns to map the target's face onto a source video. For generating a completely new video, the AI learns to synthesize the target's face and body movements based on a script or a reference video.

- Content Generation: An operator provides the desired content, perhaps a script for a speech or a reference video of another person performing the actions. The AI then synthesizes the target's likeness, voice, and movements to match this new content. This involves intricate processes of facial re-enactment, lip synchronization, and voice cloning.

- Post-Processing: The initial AI output may contain artifacts or inconsistencies. Human editors or additional AI tools refine the deepfake, ensuring seamless integration, natural lighting, and realistic textures. At this stage the output can become virtually indistinguishable from genuine media.
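The "seamless integration" in the post-processing step largely comes down to compositing. The synthesized face is pasted back into the original frame, and its edges are softened so no hard seam shows. Below is a minimal, hypothetical sketch of one simple way to do that: a feathered alpha blend on a tiny grayscale patch stored as nested Python lists. Real tools typically add color correction or more sophisticated blending. The function names and the linear feather are assumptions for illustration.

```python
def feathered_mask(height, width, feather):
    """Alpha mask. With feather > 0 it is 0.0 on the border and ramps linearly
    to 1.0 over `feather` pixels inward; with feather == 0 it is 1.0 everywhere."""
    mask = []
    for y in range(height):
        row = []
        for x in range(width):
            # Distance (in pixels) from this pixel to the nearest edge of the patch.
            d = min(x, y, width - 1 - x, height - 1 - y)
            row.append(min(1.0, d / feather) if feather > 0 else 1.0)
        mask.append(row)
    return mask


def blend(background, patch, mask):
    """Per-pixel alpha compositing: out = m * patch + (1 - m) * background."""
    return [
        [m * p + (1.0 - m) * b for b, p, m in zip(b_row, p_row, m_row)]
        for b_row, p_row, m_row in zip(background, patch, mask)
    ]


if __name__ == "__main__":
    frame = [[0.2] * 5 for _ in range(5)]      # original frame region (grayscale)
    synthetic = [[0.9] * 5 for _ in range(5)]  # generated face patch
    for row in blend(frame, synthetic, feathered_mask(5, 5, feather=2)):
        print([round(v, 2) for v in row])
```

The band where the mask ramps between 0 and 1 is exactly where the two images are averaged together. This is one reason the "slight blurring around the edges of the manipulated area" listed among the warning signs below can appear.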

A Worked Example: The Fictional 'Sakura Scandal'

Consider a hypothetical scenario during a Japanese general election. A deepfake video emerges online, purportedly showing a leading candidate, Mr. Tanaka, accepting a bribe from a shadowy figure in a dimly lit izakaya. The video is expertly crafted: Mr. Tanaka's face, his characteristic slight bow, his voice expressing gratitude for the illicit funds, all appear authentic. The video spreads like wildfire across social media platforms, amplified by algorithms. Despite immediate denials from Mr. Tanaka's campaign, the damage is done. Public trust erodes, voters question his integrity, and his poll numbers plummet. The election outcome could be irrevocably altered, all due to a meticulously constructed digital lie.

Why It Sometimes Fails: Limitations and Edge Cases

Despite their sophistication, deepfakes are not infallible. There are several tell-tale signs that can indicate a manipulation, though these are becoming increasingly subtle:

- Inconsistent Lighting or Shadows: The generated face might not perfectly match the lighting conditions of the background video.

- Unnatural Blinking Patterns: Early deepfakes often showed subjects blinking infrequently or unnaturally. While improved, subtle anomalies can still occur.

- Lack of Micro-Expressions: The nuanced, involuntary facial movements that convey genuine emotion are difficult for AI to replicate perfectly.

- Audio-Visual Desynchronization: While lip-syncing has improved, slight mismatches between spoken words and lip movements can sometimes be detected.

- Pixelation or Artifacts: Compression artifacts or slight blurring around the edges of the manipulated area can be indicators.

- Mismatched Body Language: Often only the face is manipulated, creating a disconnect between the facial expressions and the body language, which may appear stiff or unnatural.
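The blinking cue in particular can be turned into a rough, automatable measurement. This minimal sketch assumes that some face-landmark detector (not shown) already supplies six (x, y) points around one eye for every frame. It computes the eye aspect ratio (EAR), a measure from facial-landmark blink-detection work that drops sharply when the eyelid closes. It then counts blinks as short runs of low-EAR frames and reports blinks per minute. The 0.2 threshold, the two-frame minimum and the function names are illustrative assumptions, not calibrated values. A low rate is a prompt for closer inspection, not proof of manipulation.

```python
import math


def eye_aspect_ratio(p):
    """EAR from six (x, y) eye landmarks: p[0] and p[3] are the eye corners,
    p[1], p[2] lie on the upper lid and p[5], p[4] on the lower lid beneath them."""
    vertical = math.dist(p[1], p[5]) + math.dist(p[2], p[4])
    horizontal = math.dist(p[0], p[3])
    return vertical / (2.0 * horizontal)


def count_blinks(ear_series, closed_threshold=0.2, min_frames=2):
    """Count runs of at least `min_frames` consecutive frames whose EAR is below the threshold."""
    blinks, run = 0, 0
    for ear in ear_series:
        if ear < closed_threshold:
            run += 1
        else:
            if run >= min_frames:
                blinks += 1
            run = 0
    if run >= min_frames:  # a blink still in progress at the end of the clip
        blinks += 1
    return blinks


def blinks_per_minute(ear_series, fps, **kwargs):
    """Blink rate over the clip; kwargs are passed through to count_blinks."""
    minutes = len(ear_series) / fps / 60.0
    return count_blinks(ear_series, **kwargs) / minutes if minutes > 0 else 0.0


if __name__ == "__main__":
    open_eye = [(0, 0), (1, 1), (2, 1), (3, 0), (2, -1), (1, -1)]
    print(round(eye_aspect_ratio(open_eye), 3))  # a wide-open toy eye
    # Toy 30 fps clip: 10 seconds with eyes open except for two 3-frame closures.
    ears = [0.3] * 100 + [0.1] * 3 + [0.3] * 100 + [0.1] * 3 + [0.3] * 94
    print(blinks_per_minute(ears, fps=30))
```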

However, detection is a constant arms race: as detection methods improve, so do generation techniques. A complementary approach is provenance. Companies such as Adobe, through its Content Authenticity Initiative and the related C2PA industry standard, are promoting ways to attach digital watermarks or cryptographically signed records to media at the point of capture. Later alterations can then be detected, but widespread adoption remains a challenge.
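The provenance approach binds a fingerprint of the file to a tamper-evident record created at capture. The sketch below shows the core idea with Python's standard library: a SHA-256 digest of the media bytes is recorded in a small "manifest", and that manifest is authenticated. One important caveat: real schemes such as C2PA use public-key signatures backed by certificates, so anyone can verify a file without holding a secret. This sketch substitutes a shared-secret HMAC purely because it ships with the standard library. The manifest format, key and function names are hypothetical.

```python
import hashlib
import hmac
import json


def make_manifest(media_bytes, device_key, device_id):
    """At capture time: record the media's SHA-256 digest and authenticate the record.
    HMAC stands in here for the public-key signature a real provenance scheme would use."""
    digest = hashlib.sha256(media_bytes).hexdigest()
    claim = json.dumps({"device": device_id, "sha256": digest}, sort_keys=True)
    tag = hmac.new(device_key, claim.encode("utf-8"), hashlib.sha256).hexdigest()
    return {"claim": claim, "tag": tag}


def verify(media_bytes, manifest, device_key):
    """Later: reject a forged record, then check the media still matches the recorded digest."""
    expected = hmac.new(device_key, manifest["claim"].encode("utf-8"), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["tag"]):
        return False  # the record itself was altered, or made with a different key
    recorded = json.loads(manifest["claim"])["sha256"]
    return hmac.compare_digest(recorded, hashlib.sha256(media_bytes).hexdigest())


if __name__ == "__main__":
    key = b"hypothetical-camera-secret"
    original = b"raw video bytes (placeholder)"
    manifest = make_manifest(original, key, device_id="camera-0001")
    print(verify(original, manifest, key))
    print(verify(original + b"!", manifest, key))
```

Any change to the bytes, even a single altered frame, produces a different digest and fails verification. The flip side is that legitimate edits such as re-compression also break the match. This is one reason C2PA-style systems can record each subsequent edit in a new signed manifest rather than relying on a single hash.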

Where This Is Heading: The Future of Digital Deception and Defense

The trajectory of deepfake technology is clear: it will become more realistic, easier to create, and harder to detect. The implications for democracy are profound. We are entering an era where seeing may no longer be believing, and the very concept of objective truth can be weaponized. "The engineering is remarkable, but the ethical quandaries it presents are immense," noted Dr. Hiroshi Ishiguro, director of the Intelligent Robotics Laboratory at Osaka University, known for his work on highly realistic androids. "We must develop robust countermeasures, both technological and societal, with the same precision we apply to our innovations."

Japan has been quietly building expertise in AI and cybersecurity, recognizing the dual nature of these powerful technologies. Institutions like the National Institute of Advanced Industrial Science and Technology (AIST) are researching advanced detection methods, while government bodies are exploring legislative frameworks to address the spread of synthetic media. Yet technological solutions alone are insufficient. Public education is paramount, fostering a critical media literacy that empowers citizens to question and verify information, especially during election cycles. The responsibility also falls on social media platforms, like X and Facebook, to implement stricter content moderation policies and invest in AI-driven detection systems. As Reuters has reported, the pressure on these platforms to act responsibly is mounting globally.

The challenge of deepfakes in elections is not merely a technical one; it is a fundamental test of our societal resilience and our commitment to democratic principles. The future of fair and informed electoral processes hinges on our collective ability to navigate this new landscape of digital deception. We must act with foresight and determination, ensuring that the precision of AI is harnessed for progress, not for undermining the very foundations of our governance. The stakes, for Japan and for democracies worldwide, could not be higher.