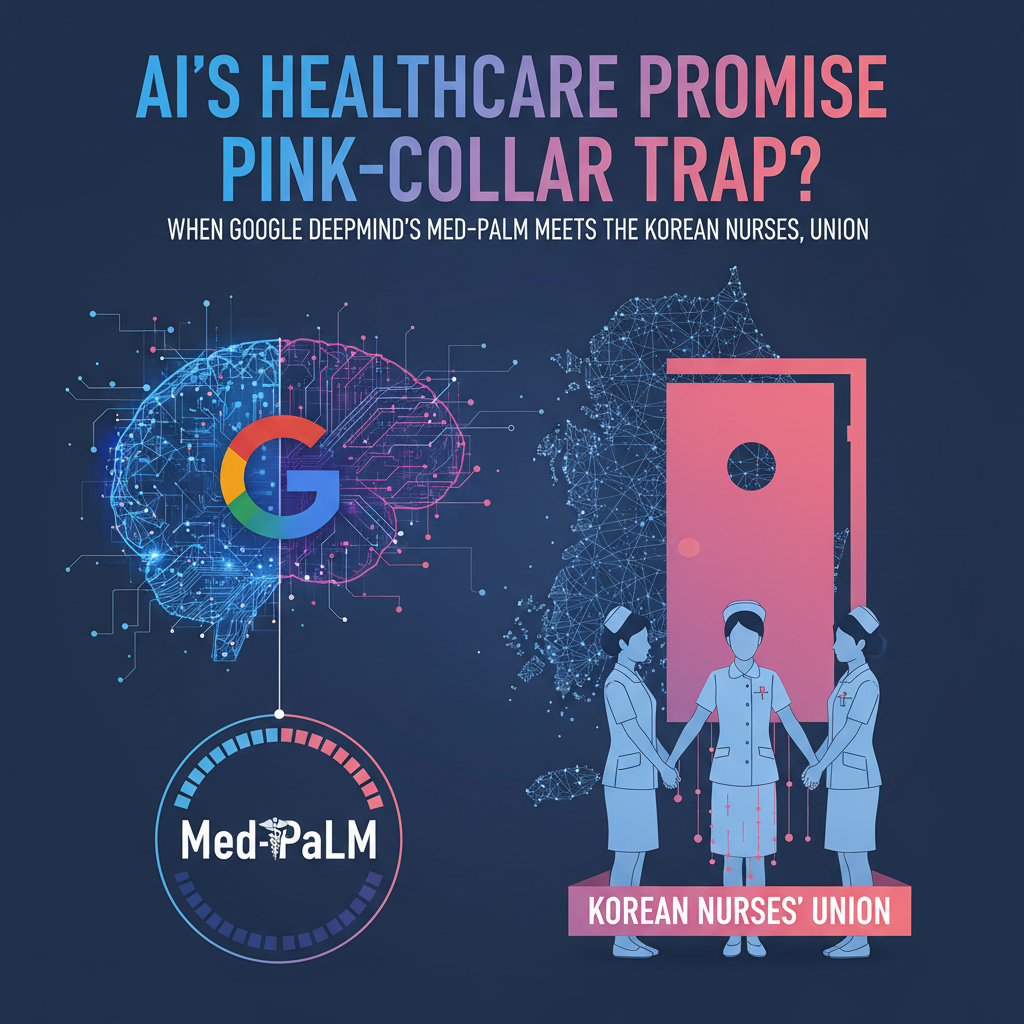

Is the future of healthcare a sterile, algorithm-driven utopia, or a battleground where human compassion clashes with cold, hard code? The tech titans, from Google DeepMind with its much-hyped Med-PaLM to Microsoft's Nuance, want us to believe in the former. They paint a picture of AI as the benevolent assistant, freeing doctors and nurses from drudgery, optimizing diagnoses, and streamlining operations. But peel back the glossy layers of their marketing, and you find a different story brewing, one where labor unions and worker movements are not just pushing back, but actively drawing lines in the sand. This isn't a fad; this is the new normal, and it's shaking the very foundations of AI's perceived inevitability.

Historically, technological advancements have always brought societal shifts, often accompanied by worker unrest. Think of the Luddites smashing textile machinery in 19th-century England, or the widespread strikes during the Industrial Revolution. Each wave of innovation promised progress, yet often delivered displacement and exploitation before equilibrium was found. South Korea's own economic miracle, fueled by relentless industrialization, saw its share of intense labor struggles, from the Guro Industrial Complex strikes to the ongoing battles for fair wages and working conditions in our chaebol-dominated landscape. We understand that progress, left unchecked, rarely benefits everyone equally. So, when Silicon Valley preaches the gospel of AI efficiency, Seoul has a different answer: show us the human cost.

Today, the pushback against AI in healthcare is not just theoretical; it's tangible and growing. Data from the International Council of Nurses indicates that over 60% of nurses globally feel their jobs are either directly threatened or significantly altered by AI automation, particularly in administrative tasks, patient monitoring, and even preliminary diagnostics. Here in South Korea, the Korean Nurses' Association, a powerful voice for our healthcare professionals, has been increasingly vocal. Last year, they reported a 15% increase in complaints from members regarding AI-driven workload changes and a perceived devaluing of human expertise. "We are not against technology that truly assists us," stated Dr. Park Eun-ji, President of the Korean Nurses' Association, in a recent interview. "But when AI tools like automated charting systems lead to increased patient loads without adequate staffing, or when diagnostic AI bypasses the critical human judgment of a physician, it becomes a threat, not a help. Our patients deserve human care, not just algorithms." Her words echo a sentiment that is reverberating across the globe, from the American Medical Association's cautious stance on AI to European unions demanding stronger regulatory frameworks.

Consider the case of a major university hospital in Seoul, which, in 2025, piloted an AI-powered patient triage system developed by a local startup, promising to reduce wait times by 30%. Sounds great, right? The reality was a surge in misdirected patients due to the AI's inability to grasp nuanced symptoms, and an overwhelming increase in stress for nurses who had to correct the system's errors while simultaneously managing frustrated patients. The pilot was quietly scaled back after only six months. This isn't just about job security; it's about the very nature of care. Healthcare is intrinsically human, built on trust, empathy, and the intangible wisdom gained from years of direct patient interaction. Can an algorithm truly replicate the comfort a nurse provides, or the intuitive diagnosis a seasoned doctor makes based on a patient's subtle non-verbal cues?

Experts are divided, of course. Dr. Lee Jae-won, a leading AI ethicist at KAIST, argues for a balanced approach. "The fear of AI is understandable, but we must not throw the baby out with the bathwater," he told DataGlobal Hub. "AI, when designed ethically and implemented thoughtfully, can augment human capabilities, not replace them. We need robust frameworks for human oversight, transparency in algorithmic decision-making, and retraining programs for workers. The problem isn't AI itself; it's how companies like OpenAI and Google are pushing it without considering the social contract." On the other hand, Professor Kim Min-joon, an economist at Seoul National University, paints a bleaker picture. "The history of capitalism shows that efficiency almost always trumps human cost in the short term," he observed. "The current wave of AI automation, especially in sectors like healthcare that are ripe for 'optimization,' is designed to cut costs, and labor is often the largest cost. Unless unions exert significant pressure, we will see a race to the bottom, where human workers are increasingly marginalized." His perspective is grim, but grounded in historical precedent.

Indeed, the K-wave is coming for AI too, but perhaps not in the way tech evangelists imagine. While South Korean companies like Samsung and LG are investing heavily in AI for smart hospitals and personalized medicine, there's a growing awareness of the social implications. The government, often seen as pro-business, is also starting to feel the heat from powerful labor groups. The Ministry of Health and Welfare recently announced a task force to study the impact of AI on healthcare employment, a clear signal that the issue is moving beyond academic debate into policy-making. This is not just a domestic concern; it's a global phenomenon. Look at the WGA and SAG-AFTRA strikes in Hollywood, where AI was a central point of contention. Or the ongoing discussions in the European Union about the AI Act, which seeks to regulate high-risk AI systems, including those in healthcare. The collective voice of workers is becoming too loud to ignore.

My verdict? This isn't a temporary blip; it's a fundamental shift. The era of unchecked AI deployment, particularly in sensitive sectors like healthcare, is over. Labor unions and worker movements are not just reacting; they are proactively shaping the future of work, demanding a seat at the table where AI policies are made. They understand that if AI is allowed to run rampant, the human element, the very core of healthcare, will be eroded. The challenge for tech giants like Google and Microsoft isn't just to build smarter algorithms, but to build trust, to demonstrate that their innovations truly serve humanity, not just their bottom line. If they fail, they will find themselves facing not just regulatory hurdles, but a global wave of organized resistance that could slow their progress to a crawl. The future of healthcare AI won't be written by engineers alone; it will be co-authored, often contentiously, by the very people whose jobs and lives it seeks to transform. This is a battle for more than just jobs; it's a battle for the soul of healthcare itself.

For more insights into how AI is reshaping industries, you can explore articles on TechCrunch or read deeper analysis on MIT Technology Review. The conversation is just beginning. What will you do when AI comes for your job? What will you demand of the companies building these systems? These are the questions we must all confront, here in Seoul and everywhere else. And for a local perspective on how South Korea is navigating these complex waters, consider the discussions around Samsung's AI Safety Institute, which is grappling with similar ethical dilemmas. It's time to stop pretending AI is an unstoppable force and start demanding that it be a responsible one. The workers are speaking; are you listening?