The notion of artificial intelligence often conjures images of super-intelligent robots or self-aware digital entities, particularly when we speak of space exploration. Hollywood has certainly played its part in shaping these grand, often fantastical, expectations. However, for those of us who have witnessed enough technological cycles, the reality on the ground, or rather, in orbit and on distant planetary surfaces, is far more pragmatic. The concept of 'Cognitive Autonomy Beyond Earth' is not about creating a Martian HAL 9000; it is about engineering machines that can think for themselves in environments where human intervention is either impossible or too slow to be effective. Let's be realistic about what this truly means.

What is Cognitive Autonomy Beyond Earth?

At its core, Cognitive Autonomy Beyond Earth refers to the capability of spacecraft, rovers, or probes to perceive their environment, understand their mission goals, plan actions, execute those actions, and adapt to unexpected situations without constant human oversight. Imagine a Malian farmer navigating a complex, unfamiliar field. Instead of waiting for instructions for every single furrow, he uses his knowledge of the land, the weather, and his crops to make decisions on the fly. This is the essence of autonomy, scaled to the extreme conditions of space. It moves beyond simple automation, where a machine follows pre-programmed steps, to a state where the machine can learn, reason, and make decisions based on dynamic data.

Why Should You Care?

While the immediate applications might seem distant from daily life in Bamako or Timbuktu, the principles behind cognitive autonomy are profoundly relevant. Consider the challenges we face with infrastructure, resource management, or even disaster response. The ability of systems to operate independently, making intelligent decisions in remote or hazardous areas, has direct parallels. For instance, imagine autonomous drones inspecting vast stretches of agricultural land for disease, or systems managing water distribution in arid regions without constant human control. Whatever the headlines suggest, the innovations driven by space exploration frequently trickle down to Earth, offering practical solutions, not moonshots, for terrestrial problems. Furthermore, developing robust, self-reliant AI systems for space pushes the boundaries of computing and energy efficiency, areas where improvements can significantly benefit emerging economies.

How Did It Develop?

The journey towards cognitive autonomy in space has been incremental, building on decades of robotics and AI research. Early space missions relied heavily on ground control, with every command uplinked and every telemetry datum downlinked. As missions grew more complex and distances increased, communication delay became a major bottleneck. For example, a radio command sent to Mars takes roughly 3 to 22 minutes to arrive, depending on where the two planets are in their orbits, so a round trip can exceed 40 minutes and real-time control is impossible. This necessitated a shift toward onboard decision-making. The Sojourner rover of the 1997 Mars Pathfinder mission, while largely teleoperated, had rudimentary hazard avoidance capabilities. This was a crucial first step. Later, NASA's Mars Exploration Rovers, Spirit and Opportunity, introduced more sophisticated autonomous navigation and scientific targeting capabilities. Advances in machine learning over the last decade, particularly deep learning and reinforcement learning, have accelerated this trajectory, allowing for more nuanced onboard decision-making.
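The communication delay is simple arithmetic: distance divided by the speed of light. The sketch below is illustrative only. The two Earth-Mars distances are approximate round figures assumed here for the closest and farthest separations, and the function name is hypothetical.

```python
# One-way signal delay between Earth and Mars: distance / speed of light.
SPEED_OF_LIGHT_KM_S = 299_792.458  # km/s (exact by definition)

# Assumed, approximate Earth-Mars separations in km (round figures).
CLOSEST_KM = 54.6e6
FARTHEST_KM = 401e6


def one_way_delay_minutes(distance_km: float) -> float:
    """Return the one-way light travel time in minutes."""
    return distance_km / SPEED_OF_LIGHT_KM_S / 60.0


if __name__ == "__main__":
    for label, distance in (("closest", CLOSEST_KM), ("farthest", FARTHEST_KM)):
        one_way = one_way_delay_minutes(distance)
        print(f"{label}: {one_way:.1f} min one way, "
              f"{2 * one_way:.1f} min round trip")
```

Under these assumed distances the one-way delay comes out at about 3 minutes at closest approach and about 22 minutes at the farthest separation. A rover that had to wait for a human reply to every obstacle would spend most of its day idle.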

How Does It Work in Simple Terms?

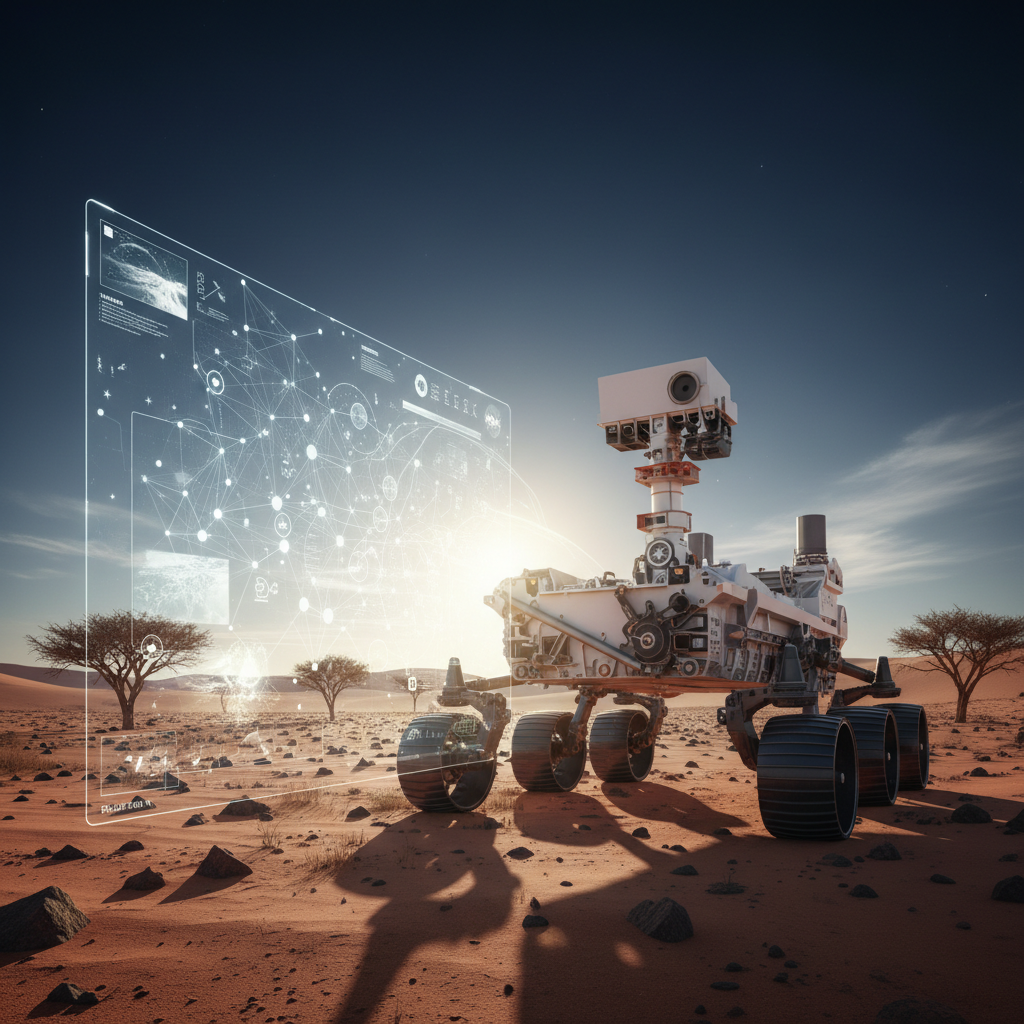

Think of a child learning to walk. They perceive their surroundings, identify obstacles, plan their next step, execute it, and adjust if they stumble. Cognitive autonomy in space functions similarly, albeit with highly specialized sensors and algorithms. A rover, for example, uses cameras and other sensors to build a 3D map of its environment. This perception data is then fed into AI algorithms, often neural networks, that interpret the scene, identifying rocks, craters, and safe paths. A planning module then generates a sequence of actions to reach a goal, such as examining a specific rock formation. Crucially, the system has a 'reasoning engine' that can evaluate the plan against mission objectives and constraints, and adapt if conditions change. If a path is unexpectedly blocked, the system does not wait for human input; it re-plans. This continuous loop of perceive, plan, act, and adapt is what defines cognitive autonomy.
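The perceive, plan, act, and adapt loop can be sketched in a few lines. This is a toy illustration, not how any flight system is implemented. It assumes a small grid world, a rover that can only sense the cell directly ahead, and a breadth-first search standing in for a real path planner. All names (`plan_path`, `drive`, `WORLD`) are hypothetical.

```python
from collections import deque
from typing import List, Optional, Tuple

Cell = Tuple[int, int]
Grid = List[List[int]]  # 0 = free, 1 = obstacle


def plan_path(grid: Grid, start: Cell, goal: Cell) -> Optional[List[Cell]]:
    """Plan: breadth-first search for a shortest path over free cells."""
    rows, cols = len(grid), len(grid[0])
    parents = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:  # walk back to the start
                path.append(cell)
                cell = parents[cell]
            return path[::-1]
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if (0 <= nr < rows and 0 <= nc < cols
                    and grid[nr][nc] == 0 and nxt not in parents):
                parents[nxt] = cell
                queue.append(nxt)
    return None  # no route exists on the current map


def drive(true_world: Grid, start: Cell, goal: Cell):
    """Run the loop; return (trace, number of re-plans, goal reached?)."""
    # The onboard map starts optimistic: every cell is believed free.
    belief = [[0] * len(row) for row in true_world]
    position, trace, replans = start, [start], 0
    path = plan_path(belief, position, goal)
    while position != goal:
        if path is None:
            return trace, replans, False  # give up: goal unreachable
        next_cell = path[1]  # path[0] is the current position
        r, c = next_cell
        if true_world[r][c] == 1:      # Perceive: the next cell is blocked
            belief[r][c] = 1           # update the onboard map
            path = plan_path(belief, position, goal)  # Adapt: re-plan
            replans += 1
            continue
        position = next_cell           # Act: take one step
        trace.append(position)
        path = path[1:]
    return trace, replans, True


WORLD = [
    [0, 0, 0, 0],
    [0, 0, 0, 0],
    [0, 1, 0, 0],  # a rock the rover does not know about in advance
]

if __name__ == "__main__":
    trace, replans, reached = drive(WORLD, start=(0, 0), goal=(2, 3))
    print(f"reached={reached}, replans={replans}, steps={len(trace) - 1}")
```

In this example the rover's first plan runs along the bottom row, hits the unknown rock at cell (2, 1), and re-plans once on its own before reaching the goal. A real system would replace the grid with a 3D terrain map and the search with a planner that accounts for slope, wheel slip, and mission constraints, but the structure of the loop is the same.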

Real-World Examples

- NASA's Perseverance Rover: This is perhaps the most prominent example. The Perseverance rover, currently exploring Jezero Crater on Mars, employs advanced autonomous navigation capabilities. Its 'AutoNav' system allows it to drive much faster than previous rovers, covering hundreds of meters per Martian day by making its own decisions about routes and avoiding hazards. It uses multiple cameras and sophisticated software to create detailed terrain maps and identify safe paths, drastically reducing the need for human intervention in its daily traverses. This efficiency is paramount for achieving its ambitious scientific goals, including collecting samples for potential return to Earth. According to Dr. Vandi Verma, Chief Engineer for Robotic Operations at NASA's Jet Propulsion Laboratory,