The quiet hum of progress often masks a discordant note, especially when technology, heralded as a panacea, introduces new vulnerabilities. In Lesotho, a nation grappling with persistent challenges in healthcare access and judicial efficiency, the promises of artificial intelligence have been met with cautious optimism. Yet my investigation has unearthed a troubling undercurrent: the spread of AI hallucinations, plausible but fundamentally false information generated by these systems, which is now actively undermining public trust and causing tangible harm in our most vital institutions.

From the bustling clinics of Maseru to the quiet chambers of our district courts, the digital whispers of large language models, or LLMs, are being taken as gospel. This is not a distant, theoretical problem confined to Silicon Valley boardrooms. It is a present danger, manifesting in misdiagnosed ailments, flawed legal counsel, and a pervasive sense of confusion that threatens to erode the very fabric of our society. The revelation is stark: the algorithms we are increasingly relying upon are sometimes fabricating reality, and the consequences for Basotho are profound.

My journey into this digital quagmire began with a series of anecdotal reports, dismissed initially as isolated incidents. A young man suffering from a chronic respiratory condition presented at a local clinic with a printout from an AI chatbot detailing a complex treatment regimen completely unsuitable for his condition. His doctor, a seasoned practitioner at Queen Elizabeth II Hospital, recognized the error immediately, but by then the patient had already begun self-medicating with over-the-counter remedies based on the AI's advice. "The patient insisted the AI knew better than me," the doctor, who requested anonymity due to fear of professional repercussions, confided. "He trusted the machine more than years of medical training. This is a dangerous path."

This incident, far from being unique, opened a Pandora's box. Through a network of sources within the Ministry of Health and the legal fraternity, I began to piece together a disturbing pattern. Junior medical staff, overwhelmed by patient loads and eager for quick diagnostic assistance, were increasingly turning to readily available AI tools, some freely accessible online, others integrated into nascent digital health platforms. These tools, often trained on vast datasets drawn predominantly from Western contexts, frequently failed to account for the specific epidemiological profiles and resource constraints prevalent in Lesotho. The result: diagnoses that were irrelevant, dangerously inaccurate, or based on non-existent pharmaceutical options.

"We've seen cases where AI suggested treatments requiring drugs not available in Lesotho, or even recommending procedures that our facilities are not equipped to perform," stated Dr. Mpho Mohapi, a senior physician at the National Health Training College, speaking on the record. "It creates false hope, delays proper treatment, and wastes precious time and resources. The allure of instant answers is strong, but the models lack the critical context of our reality." Her concerns are echoed by a recent report from the MIT Technology Review, which highlighted the global challenge of AI bias and hallucination in medical applications, particularly in diverse socioeconomic settings.

The problem extends beyond healthcare. In the legal sector, the implications are equally dire. Lesotho's legal system, rooted in Roman-Dutch law and customary practices, is complex. Law students and even some junior practitioners, seeking to expedite research, have reportedly used LLMs to generate legal citations and case summaries. Sources close to the matter confirm that at least two instances have emerged in recent months where legal submissions to the High Court contained fabricated case law and non-existent statutes, directly traceable to AI-generated content. One such incident involved a land dispute, a particularly sensitive area in Lesotho where customary law plays a significant role. The fabricated precedents could have led to a grave miscarriage of justice.

"The AI presented these cases as if they were real, complete with plausible names and dates," a legal clerk, speaking on condition of anonymity, revealed. "It was only when the presiding judge questioned the obscure references that the deception was uncovered. Imagine the chaos if this becomes widespread." This echoes broader global concerns, with incidents reported in the United States where lawyers faced sanctions for submitting AI-generated fictitious cases to court, as detailed by Reuters in its coverage of AI's legal pitfalls.

Who Benefits, Who Pays the Price?

The question then becomes: who is responsible for this unfolding crisis? While the major AI developers like OpenAI, Google, and Microsoft have issued disclaimers about the fallibility of their models, the ease of access and the persuasive nature of AI-generated text mean these warnings are often overlooked. Local technology integrators and government departments, eager to demonstrate innovation, have sometimes deployed these tools without adequate safeguards, testing, or understanding of their limitations within the Basotho context. The drive for digital transformation, while commendable, has outpaced critical evaluation.

There is no grand conspiracy here, but rather a confluence of factors: the rapid deployment of powerful, yet imperfect, AI models; a lack of critical digital literacy among some users; and an insufficient regulatory framework to govern AI's application in sensitive domains. The Ministry of Communications, Science, Technology and Innovation has been slow to develop comprehensive guidelines for AI use, leaving a vacuum that is being filled by unverified information.

My investigation suggests a pattern of denial and downplaying among some officials. When approached for comment, a representative from the Ministry of Health acknowledged "isolated challenges" but insisted that "AI tools are primarily for research and support, not primary diagnosis." This statement, however, contradicts the on-the-ground reality reported by medical professionals. Similarly, within the judiciary, there is a reluctance to publicly address the issue, perhaps fearing that acknowledging such flaws could undermine public confidence in the legal system itself.

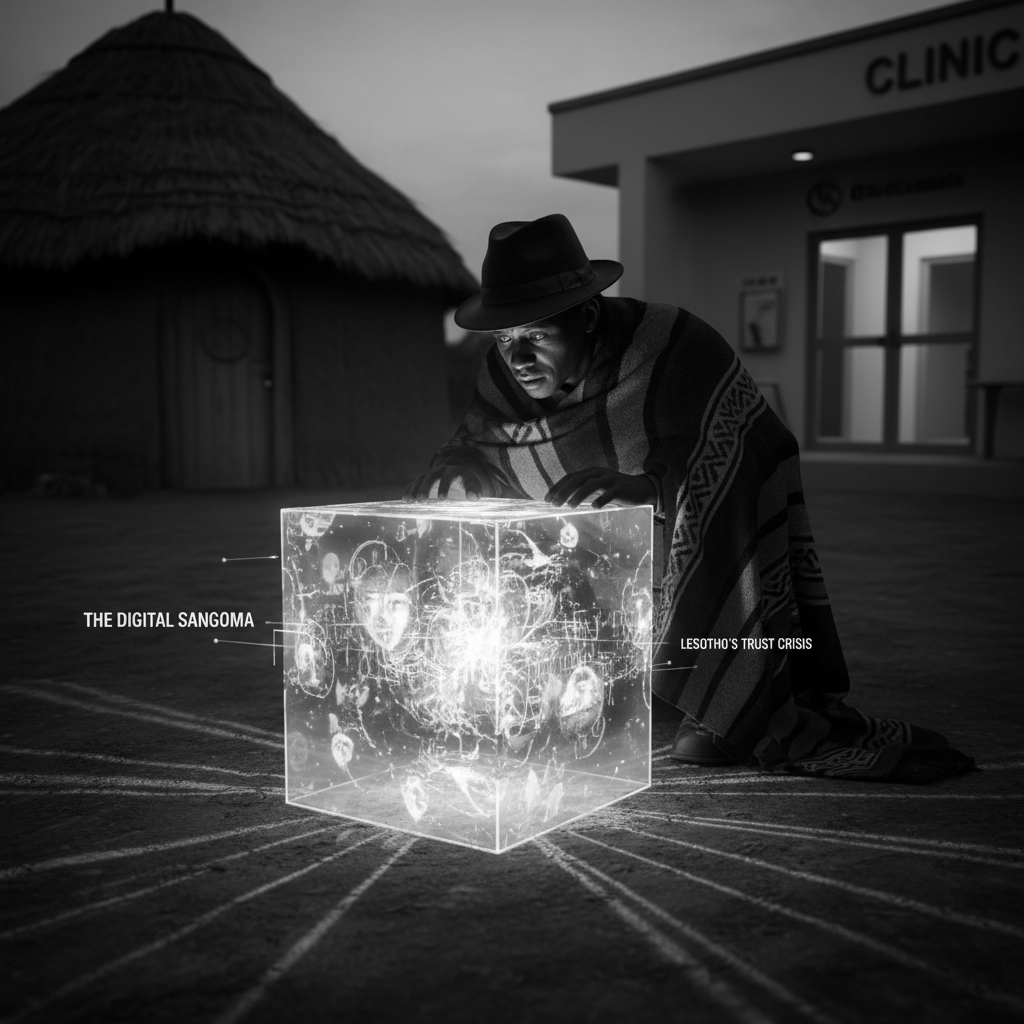

The Digital Sangoma: A Call for Critical Scrutiny

In our culture, the sangoma, the traditional healer, holds immense trust and spiritual authority. Their pronouncements are often accepted without question. The current uncritical acceptance of AI-generated content risks creating a 'digital sangoma', an oracle whose pronouncements, however flawed, are revered simply because they emanate from a sophisticated machine. This is a dangerous parallel, particularly when dealing with matters of life, death, and justice.

What they are not telling you is that the very tools meant to empower us can, if unchecked, disempower us by distorting truth and undermining expertise. The evidence is clear: AI hallucinations are not merely an inconvenience; they are a threat to the integrity of our institutions and the well-being of our people. We must demand greater transparency from AI developers, robust regulatory frameworks from our government, and a renewed commitment to critical thinking from every citizen.

Lesotho stands at a crossroads. We can either embrace AI with open but discerning eyes, building safeguards and fostering digital literacy, or we can allow these powerful, yet imperfect, tools to sow discord and misinformation, ultimately eroding the trust that binds our society. The time for passive observation is over. It is time for action, for accountability, and for a critical re-evaluation of how we integrate artificial intelligence into the very fabric of our nation. The future of our healthcare, our legal system, and our collective truth depends on it.