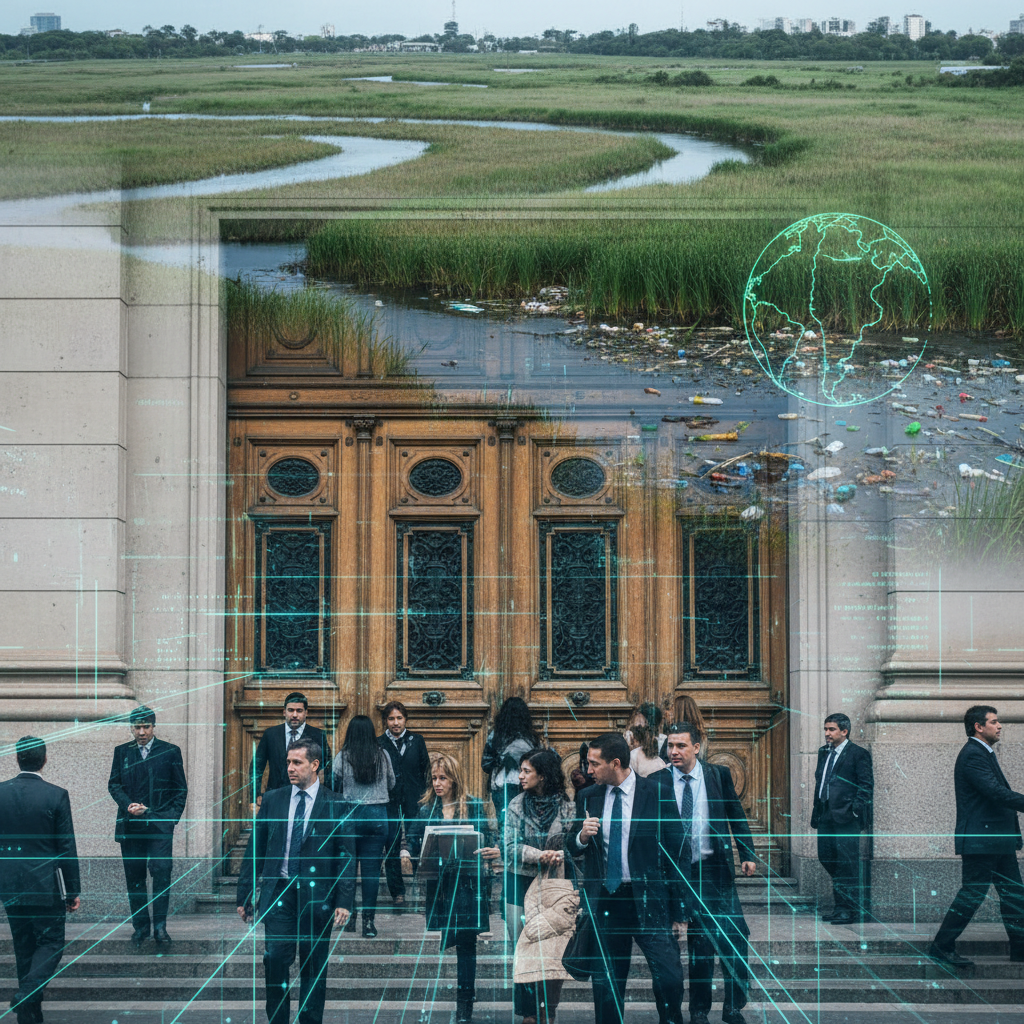

The notion that artificial intelligence can streamline justice, predict crime, and even influence sentencing has captivated technologists and policymakers alike. From Silicon Valley boardrooms to the halls of power in Washington, the allure of algorithmic efficiency is potent. Yet here in Buenos Aires, where economic volatility and social disparities are not abstract concepts but daily realities, we must ask: does this actually work? Let's look at the evidence, particularly as companies like Palantir Technologies increasingly eye markets beyond the Global North.

The global discourse around AI in criminal justice often centers on its potential to reduce bias, enhance efficiency, and improve public safety. Proponents argue that algorithms, devoid of human emotion, can analyze vast datasets to identify crime hotspots, predict recidivism, and even suggest appropriate sentences with an objectivity human judges cannot always maintain. The reality, however, is far more complex, particularly when these systems are deployed in contexts marked by historical inequalities and systemic vulnerabilities, such as those found across Latin America.

Consider the concept of predictive policing, a cornerstone of many AI justice initiatives. Companies like Palantir, known for their data integration platforms, offer tools that aggregate information from diverse sources: police records, social media, public databases, and even sensor data. These systems then purport to identify patterns and forecast where and when crimes are most likely to occur. In theory, this allows law enforcement to deploy resources more effectively. In practice, as we have seen in numerous pilot programs globally, these algorithms often amplify existing biases. If historical policing data disproportionately reflects arrests in marginalized communities, the AI will learn to predict more crime in those same areas, creating a self-fulfilling prophecy of over-policing and exacerbating social tensions.
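The feedback loop described above can be made concrete with a toy simulation. What follows is a minimal sketch under stated assumptions, not a model of any vendor's product: the function `simulate_feedback`, the two-district setup, the 70% "hotspot" patrol share, and all numbers are hypothetical and chosen only for illustration. Both districts have the same underlying offense rate; the only difference is a small gap in historical records.

```python
def simulate_feedback(initial_records, true_rate, rounds,
                      hotspot_share=0.7, patrols=100):
    """Toy model (hypothetical): patrols follow past records, records follow patrols.

    Every district has the SAME true_rate. Each round, the district with the
    most recorded incidents receives `hotspot_share` of all patrols, and new
    records are proportional to patrol presence, not to any real difference
    in crime.
    """
    if len(initial_records) < 2:
        raise ValueError("need at least two districts")
    records = [float(r) for r in initial_records]
    shares_over_time = []
    for _ in range(rounds):
        # The "prediction": the district with the most past records is the hotspot.
        top = max(range(len(records)), key=lambda i: records[i])
        rest_share = (1 - hotspot_share) / (len(records) - 1)
        for i in range(len(records)):
            patrol_share = hotspot_share if i == top else rest_share
            # More patrols means more incidents observed and recorded.
            records[i] += patrols * patrol_share * true_rate
        total = sum(records)
        shares_over_time.append([r / total for r in records])
    return shares_over_time


# Illustrative starting point: district 0 holds 55% of historical records.
history = simulate_feedback([55, 45], true_rate=0.5, rounds=10)
for round_no, shares in enumerate(history, start=1):
    print(round_no, [round(s, 3) for s in shares])
```

In this toy run, district 0's share of recorded incidents climbs from 55% to about 67.5% after ten rounds, drifting toward the 70% patrol share, even though the underlying rate is identical in both districts. The records end up measuring where police were sent, not where crime occurred.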

“The data fed into these algorithms is not neutral, it is a reflection of past human decisions, prejudices, and resource allocations,” explains Dr. Sofía Pereyra, a leading criminologist at the University of Buenos Aires. “To assume that an algorithm can magically cleanse this historical baggage is not just naive, it is dangerous. It risks automating and scaling injustice.” Dr. Pereyra points to studies from the United States where predictive policing models have been shown to disproportionately target minority neighborhoods, leading to higher arrest rates for minor offenses and a perpetuation of systemic discrimination. For a nation like Argentina, with its own complex history of social stratification and state power, this potential for algorithmic bias is not merely an academic concern; it is a profound ethical challenge.

The economic imperative for adopting such technologies is often presented as undeniable. In a country grappling with persistent inflation and budgetary constraints, the promise of more efficient law enforcement and judicial processes can be very attractive. Proponents might argue that AI can help optimize scarce resources, reducing the need for extensive human intervention in certain areas. However, the cost of implementing and maintaining these sophisticated systems, often developed by foreign firms, can be exorbitant. Moreover, the lack of transparency inherent in many proprietary algorithms means that local authorities may not fully understand how these systems arrive at their conclusions, making oversight and accountability incredibly difficult.

Sentencing algorithms present an even more fraught ethical landscape. These tools analyze an offender's profile, criminal history, and other factors to recommend a sentence or assess the likelihood of re-offense. While the stated goal is to ensure consistency and fairness, the underlying data can embed and perpetuate societal biases. For instance, if socioeconomic factors correlate with higher recidivism rates in the training data, an algorithm might implicitly penalize individuals from impoverished backgrounds, even if those factors are not explicitly coded as discriminatory. This raises fundamental questions about due process and the very nature of justice. Can we truly delegate the moral weight of sentencing to a mathematical model?
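A small sketch shows how this kind of proxy effect can arise even when a sensitive attribute is never given to the model. Everything here is an assumption made for illustration: the twenty records in `RECORDS` are invented, the groups "A" and "B" are placeholders, and the "model" is just a frequency table keyed on a single `low_income` flag, far simpler than any real risk-assessment tool.

```python
from collections import defaultdict

# Hypothetical records: (group, low_income, reoffended). Invented for illustration.
RECORDS = (
    [("A", True, True)] * 4 + [("A", True, False)] * 4 +
    [("A", False, False)] * 2 +
    [("B", True, True)] * 1 + [("B", True, False)] * 1 +
    [("B", False, True)] * 2 + [("B", False, False)] * 6
)


def fit_rates(records):
    """'Train' a risk score: the recorded re-offense rate per income flag.

    The group label is deliberately ignored here.
    """
    counts = defaultdict(lambda: [0, 0])  # low_income -> [reoffended, total]
    for _group, low_income, reoffended in records:
        counts[low_income][0] += int(reoffended)
        counts[low_income][1] += 1
    return {flag: hits / total for flag, (hits, total) in counts.items()}


def mean_score_by_group(records, rates):
    """Average risk score each group receives, although group is not an input."""
    sums = defaultdict(float)
    sizes = defaultdict(int)
    for group, low_income, _reoffended in records:
        sums[group] += rates[low_income]
        sizes[group] += 1
    return {group: sums[group] / sizes[group] for group in sums}


rates = fit_rates(RECORDS)
scores = mean_score_by_group(RECORDS, rates)
print(rates)
print(scores)
```

Because most of group A is flagged as low income in this invented dataset, its members receive a higher average risk score than group B, even though the scoring rule never looks at group membership. Removing a sensitive field from the inputs does not, by itself, remove its influence.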

“The Argentine perspective is more nuanced than a simple embrace of technological solutions,” states Ricardo Gómez, a former judge and now a legal tech consultant based in Córdoba. “We have a deep-seated appreciation for human judgment, for the contextual understanding that a judge brings to a case, something an algorithm cannot replicate. The idea of a machine determining a person's liberty, without full transparency or human override, is deeply unsettling to our legal tradition.” Gómez’s concerns echo those of many legal scholars who fear that algorithmic sentencing could erode the principles of individualized justice and rehabilitation.

Indeed, the lack of transparency surrounding many commercial AI systems is a significant hurdle. Companies like Palantir, while providing powerful data analysis tools, often guard their algorithms as proprietary trade secrets. This makes independent auditing for bias, accuracy, and fairness exceedingly difficult. How can a defense attorney challenge a sentence influenced by an algorithm if they cannot examine its inner workings? How can civil society organizations advocate for reform if the mechanisms of decision-making are opaque?

Reform in this area must be proactive and deeply rooted in local context. Simply importing solutions designed for different legal and social landscapes is a recipe for disaster. Any deployment of AI in criminal justice in Argentina must be accompanied by robust regulatory frameworks, independent ethical oversight, and a commitment to transparency. We need clear guidelines on data collection, storage, and usage, ensuring compliance with privacy laws and human rights standards. Furthermore, there must be mechanisms for human review and override at every stage of the algorithmic decision-making process.

Open source alternatives, or at least auditable algorithms, could offer a path forward, allowing local experts to scrutinize and adapt these tools to Argentine realities. Initiatives like the Observatorio de Inteligencia Artificial y Justicia (Observatory of AI and Justice) in Argentina are crucial in fostering this critical dialogue, bringing together legal experts, technologists, and human rights advocates to shape a responsible approach. According to MIT Technology Review, the global conversation about algorithmic accountability is intensifying, and Argentina must be an active participant, not merely a recipient of foreign technologies.

The challenge is not to reject technology outright, but to domesticate it, to ensure it serves our values and addresses our specific societal needs, rather than imposing external frameworks. Buenos Aires has questions Silicon Valley can't answer about how these technologies will interact with our unique social fabric, our economic pressures, and our deeply held beliefs about justice and human dignity. The path forward requires rigorous data analysis, ethical deliberation, and a steadfast commitment to human rights, ensuring that the pursuit of efficiency does not come at the cost of equity and justice. The stakes are too high to simply accept the promises of algorithmic certainty without demanding verifiable proof and unwavering accountability. The conversation is ongoing, and our critical engagement is paramount.