The Pacific Ocean, vast and deep, holds stories of immense power, of currents that shape destinies, and of resources that sustain life. In the digital realm, a different kind of current is swirling, one fueled by billions of dollars and the promise of a future shaped by artificial general intelligence, or AGI. At the heart of this maelstrom sits Anthropic, a company that has, in recent years, amassed an astonishing war chest from giants like Amazon and Google, all in the name of building 'safe' AGI.

The Billion-Dollar Tsunami: What's Happening

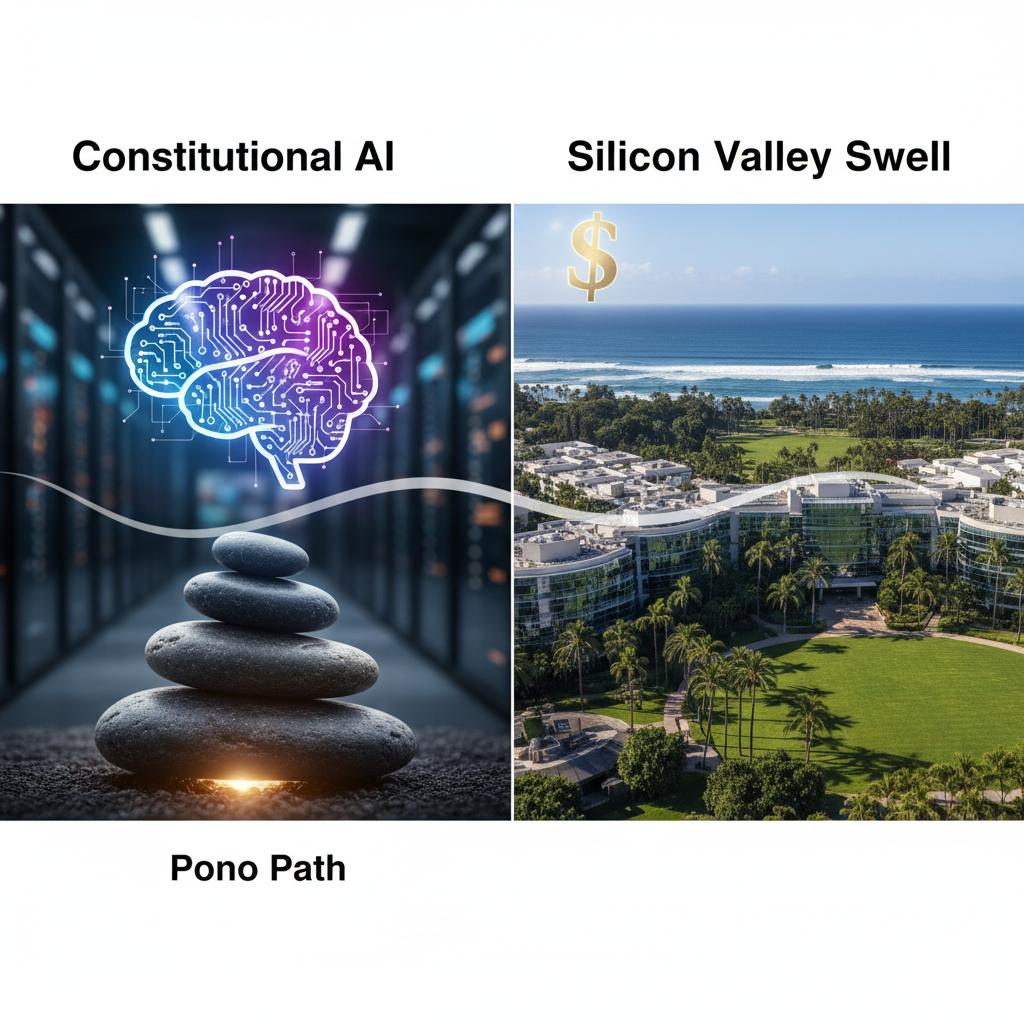

Let's be clear: the scale of investment in Anthropic is not just big; it's unprecedented for a company focused explicitly on AI safety and alignment from its inception. We are talking about multiple rounds, with Amazon reportedly committing up to $4 billion and Google investing another $2 billion, among other significant backers. This isn't venture capital playing with pocket change; it's a strategic move by some of the world's most powerful corporations to secure a foothold in, or perhaps even control over, what many believe will be the most transformative technology in human history. Anthropic's approach, dubbed 'Constitutional AI,' aims to imbue its models, like Claude, with a set of guiding principles, a kind of digital moral compass, to ensure they act beneficially and avoid harmful outputs. They are trying to bake ethics into the silicon from day one, rather than patching it on later.

Why Most People Are Ignoring This Digital Tide

For many, especially here in Hawaii and across the wider Pacific, the news of Anthropic's funding rounds feels abstract, distant, like a storm brewing far out at sea. We are focused on the immediate, the tangible: rising sea levels, sustainable tourism, preserving our 'āina, and the daily rhythm of life. The jargon of 'AGI' and 'Constitutional AI' can sound like something out of a science fiction novel, far removed from the practicalities of our island existence. There's a natural human tendency to prioritize what's directly in front of us, and the long-term, existential implications of AI often get lost in the daily grind. Plus, the tech world moves at a dizzying pace, making it hard for anyone outside the immediate bubble to keep up, let alone grasp the profound implications of these developments. The sheer complexity of the technology, coupled with its rapid evolution, creates an attention gap, leaving many unaware of the monumental shifts underway.

How This Digital Swell Affects YOU

Make no mistake, this isn't just about Silicon Valley's latest obsession. The race for AGI, and Anthropic's specific approach to it, will ripple through every aspect of your life, whether you live in Honolulu or on a remote atoll. Imagine an AI that can answer complex legal queries, support medical diagnoses, or even help manage our precious natural resources. If Anthropic succeeds in building an AGI that is truly aligned with human values, it could revolutionize education, healthcare, and governance, potentially offering solutions to some of our most intractable problems. For our keiki, the educational landscape could be transformed, offering personalized learning experiences previously unimaginable. For our kūpuna, AI could provide advanced, empathetic care. But if they fail, or if their 'constitution' doesn't reflect the diverse values of humanity, we could see an AI that, while powerful, is culturally blind or even harmful. Your job, your privacy, your access to information, and even the stories we tell ourselves about who we are could all be profoundly altered. The future is being built on volcanic rock, and these AI models are part of its foundation.

The Bigger Picture: A Global Crossroads

Hawaii sits at the crossroads of the Pacific and Silicon Valley, a unique vantage point from which to observe these global currents. The development of AGI is not merely a technological race; it is a geopolitical one. Nations and corporations are vying for leadership, understanding that whoever controls AGI could wield immense power. Anthropic's significant funding from US-based tech giants underscores a Western-centric approach to AI development, one that prioritizes certain ethical frameworks and societal norms. This raises critical questions for us in the Pacific: Will the 'constitution' of these AGI systems truly be universal, or will it reflect a narrow, dominant worldview? How do indigenous values, the concept of aloha, and our deep connection to the 'āina get incorporated into these foundational AI models? If AGI is to serve all humanity, it must be developed with a global consciousness, not just a Silicon Valley one. The potential for AGI to accelerate scientific discovery, from climate modeling to oceanography, is immense, offering tools to protect our fragile ecosystems. However, the risk of exacerbating existing inequalities, or of an AI that misunderstands or devalues non-Western perspectives, is equally profound. This isn't just about what AI can do, but what it should do, and for whom.

What Experts Are Saying

The conversation around Anthropic's mission and the broader AGI race is vibrant and often contentious. Dr. Dario Amodei, Anthropic's CEO, has consistently articulated his company's commitment to safety, stating, "We believe that building safe and steerable AI systems will require significant research and development, and we are committed to making that investment." This sentiment is echoed by many in the AI safety community, who see Anthropic as a crucial player in mitigating potential catastrophic risks. However, others express caution. Professor Stuart Russell, a leading AI researcher at UC Berkeley and author of 'Human Compatible: AI and the Problem of Control,' has often warned about the fundamental challenge of aligning superintelligent AI with human values. He once noted, "The standard model of AI, which is to build systems that maximize expected utility, is exactly the wrong thing to do." His concern lies in the difficulty of fully specifying human objectives without unintended consequences. Meanwhile, Dr. Joy Buolamwini, founder of the Algorithmic Justice League, consistently highlights the critical need for diverse perspectives in AI development. She argues, "AI systems reflect the values and priorities of their creators. If the creators are homogenous, the systems will be biased." Her work underscores the importance of including voices from marginalized communities, including indigenous ones, in shaping these powerful technologies. Even within the industry, there's a healthy debate. A prominent venture capitalist, speaking on background, recently remarked, "The money pouring into Anthropic isn't just about belief in their tech, it's an insurance policy. Everyone wants a seat at the table if AGI truly emerges, and Anthropic's safety-first narrative is a powerful differentiator." This suggests that while safety is paramount, the underlying competitive drive remains fierce.

What You Can Do About It

Ignoring this digital tide is no longer an option. First, educate yourself. Follow reputable sources like MIT Technology Review and Wired to stay informed about AI developments. Second, demand transparency and accountability from AI developers and policymakers. Advocate for diverse representation in AI research and governance. Here in Hawaii, we have an opportunity to lead by example, by fostering discussions that integrate our cultural values into the global AI discourse. We must ensure that aloha means more than hello: it is a framework for ethical AI, a guiding principle that can inform how these systems are designed and deployed. Support local initiatives that explore AI's potential in culturally sensitive ways, perhaps even influencing how AGI could assist in preserving endangered languages or managing our precious marine resources. Engage with your elected officials to ensure that future AI policies consider the unique needs and perspectives of our communities. Consider how AI might impact our local economy and workforce.

The Bottom Line: Why This Will Matter in 5 Years

In five years, the abstract concept of AGI will likely be far less abstract. Anthropic's Claude, and similar models from competitors like OpenAI and Google DeepMind, will have permeated our lives in ways we can only begin to imagine. The 'Constitutional AI' approach, whether successful or not, will have set a precedent for how we attempt to control and align superintelligent systems. The ethical frameworks embedded in these early AGI models will profoundly shape the global digital landscape. If Anthropic's efforts bear fruit, we could see a future where AI genuinely assists humanity, guided by principles designed to prevent harm. If they falter, or if the 'constitution' is too narrow, we risk an AI future that is powerful but potentially misaligned with the broader human good, especially for cultures and communities not at the center of its development. The billions invested today are not just building algorithms; they are laying the groundwork for our future societies. We must ensure that this future, like a healthy ecosystem, is diverse, resilient, and serves the highest good for all.