The global discussion around artificial general intelligence (AGI) often feels like a high-stakes poker game, with OpenAI, Google DeepMind, and Anthropic laying down ever-larger bets. Each new model release and each incremental gain in benchmark scores fuels a narrative of an inevitable, perhaps imminent, breakthrough. Yet beneath this clamor, a quieter and more fundamental shift is under way in the pursuit of true intelligence, one that resonates deeply with Finland's pragmatic approach to innovation.

For years, AI has run on hardware built around the von Neumann architecture, in which processing and memory are separate. This design, while foundational to modern computing, creates an inherent constraint, the 'von Neumann bottleneck,' that becomes increasingly costly for energy-intensive deep learning models, because data must be shuttled constantly between the two. Training a single large language model can consume as much energy as hundreds of homes use in a year, a cost that is not sustainable as models scale. This is where neuromorphic computing enters the picture, offering a radically different blueprint inspired by the human brain, in which memory and processing are intimately intertwined.

The Breakthrough in Plain Language: Beyond the Von Neumann Bottleneck

Imagine a computer chip that does not just simulate a brain but functions more like one. Instead of shuttling data between a CPU and RAM, neuromorphic chips integrate memory directly with processing units, mimicking the parallel, event-driven nature of biological neurons and synapses. This allows far more efficient data handling and significantly lower power consumption, particularly for tasks involving pattern recognition, anomaly detection, and continuous learning. It is not about brute-force computation, but about elegant, efficient processing.
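
To make the event-driven idea concrete, here is a minimal Python sketch comparing a conventional dense update with an event-driven one for a tiny layer. It is an illustration only, not how any particular chip is programmed: the function names, the weights, and the input pattern are all hypothetical, and inputs are assumed to be binary spikes.

```python
def dense_update(weights, inputs):
    """Process every input on every step, whether or not it is active."""
    outputs = [0.0] * len(weights)
    ops = 0
    for j, row in enumerate(weights):
        for i, x in enumerate(inputs):
            outputs[j] += row[i] * x
            ops += 1
    return outputs, ops


def event_driven_update(weights, spike_indices):
    """Do work only for the inputs that actually spiked."""
    outputs = [0.0] * len(weights)
    ops = 0
    for i in spike_indices:
        for j, row in enumerate(weights):
            outputs[j] += row[i]  # a spike counts as 1, so no multiply is needed
            ops += 1
    return outputs, ops


# Hypothetical layer: 3 output neurons, 8 inputs, only 2 of which spike.
weights = [
    [0.5, 0.25, 0.0, 1.0, 0.5, 0.0, 0.25, 0.75],
    [1.0, 0.0, 0.5, 0.25, 0.0, 0.5, 1.0, 0.25],
    [0.0, 0.75, 0.25, 0.5, 1.0, 0.25, 0.0, 0.5],
]
inputs = [0, 0, 1, 0, 0, 0, 1, 0]
spike_indices = [i for i, x in enumerate(inputs) if x]

dense_out, dense_ops = dense_update(weights, inputs)
event_out, event_ops = event_driven_update(weights, spike_indices)

print(dense_out == event_out)  # same result either way
print(dense_ops, event_ops)    # 24 operations versus 6
```

With a quarter of the inputs active, the event-driven version does a quarter of the work, and the saving grows as activity becomes sparser.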

Recent work from IBM Research, with its NorthPole chip, and Intel, with Loihi, demonstrates tangible progress. IBM's NorthPole, unveiled in late 2023, packs 22 billion transistors and can perform inference tasks with significantly less energy than conventional GPUs. It achieves this chiefly by keeping memory on the chip, interleaved with the compute cores, so that data does not have to travel to and from external memory. Intel's Loihi departs further from conventional design: it moves away from globally clocked operation to an asynchronous, event-driven model in which computation occurs only when necessary, much like neurons firing in the brain. This is a crucial distinction, as it allows the hardware to exploit sparsity and asynchronous processing, hallmarks of biological intelligence.

Why This Matters: A Sustainable Path to Intelligence

The implications are profound. If the current trajectory of AGI development relies solely on scaling up existing architectures, we face an energy crisis of unprecedented proportions. The sheer computational demands of future, more capable models would be unsustainable, both economically and environmentally. Neuromorphic computing offers a potential escape route. It promises to deliver sophisticated AI capabilities not by throwing more power at the problem, but by fundamentally rethinking how computation is performed.

Consider the operational costs for companies like Google and Microsoft, which are investing billions in AI infrastructure. Reducing the energy footprint of their models by orders of magnitude would translate into enormous savings and a more defensible long-term strategy. As Dr. Dharmendra Modha, IBM Fellow and Chief Architect of NorthPole, stated, “We are building a new foundation for AI, one that is fundamentally more efficient and scalable than what exists today.” This sentiment underscores a shift from merely achieving performance to achieving sustainable performance.

The Technical Details: Spiking Neural Networks and In-Memory Computing

At the heart of neuromorphic computing are spiking neural networks (SNNs). Unlike traditional artificial neural networks (ANNs), which pass continuous values between layers, SNNs communicate through discrete events in time, or 'spikes,' much like biological neurons. A neuron emits a spike only when its accumulated input crosses a threshold, so communication is sparse and event-driven. This contrasts sharply with the dense computations of ANNs, in which every unit is evaluated on every pass and data moves constantly.
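
The threshold behavior described above is often modeled with a leaky integrate-and-fire neuron. The Python sketch below is a deliberately simplified version; the class name, the threshold of 1.0, the leak factor of 0.9, and the input sequence are illustrative assumptions rather than parameters of any real chip.

```python
from dataclasses import dataclass


@dataclass
class LIFNeuron:
    threshold: float = 1.0  # potential at which the neuron fires (assumed value)
    leak: float = 0.9       # fraction of potential kept each step (assumed value)
    potential: float = 0.0  # current membrane potential

    def step(self, input_current: float) -> bool:
        """Advance one time step and return True if the neuron spikes."""
        # Leak a little of the stored potential, then add the new input.
        self.potential = self.potential * self.leak + input_current
        if self.potential >= self.threshold:
            self.potential = 0.0  # reset after firing
            return True
        return False


neuron = LIFNeuron()
inputs = [0.3, 0.3, 0.3, 0.3, 0.0, 0.0, 0.9, 0.2]
spikes = [neuron.step(x) for x in inputs]

print(spikes)
# [False, False, False, True, False, False, False, True]
```

The neuron stays silent while its input accumulates and emits only two spikes across eight time steps, which is the sparse, event-driven communication that SNN hardware is built to exploit.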

Key to the efficiency of neuromorphic chips is in-memory computing. Instead of relying on a separate memory bank, these chips place processing elements within or directly beside the memory arrays. This removes the need to move data constantly between separate processing and memory units, drastically reducing energy consumption and latency. Intel's Loihi research chip, for example, integrates 131,072 neurons (128 cores of 1,024 neurons each) and roughly 130 million synapses, all designed to process information locally and asynchronously. Researchers at Purdue University, in collaboration with Intel, have demonstrated how Loihi can be used for real-time gesture recognition at remarkably low power, consuming only tens of milliwatts.
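
A rough way to see why data movement matters so much is a back-of-the-envelope energy model. The Python sketch below assumes one weight byte is fetched per multiply-accumulate, and the per-operation energy costs are placeholder values chosen only to show the shape of the argument; they are not measurements of Loihi or any other hardware.

```python
def layer_energy_pj(n_macs, bytes_moved, pj_per_mac, pj_per_byte):
    """Total energy in picojoules: arithmetic plus data movement."""
    return n_macs * pj_per_mac + bytes_moved * pj_per_byte


# All figures below are hypothetical placeholders, not measured values.
N_MACS = 1_000_000          # multiply-accumulate operations in one layer
BYTES_MOVED = 1_000_000     # assume one weight byte fetched per operation
PJ_PER_MAC = 1              # assumed cost of the arithmetic itself
PJ_PER_BYTE_OFF_CHIP = 100  # assumed cost of fetching from separate memory
PJ_PER_BYTE_LOCAL = 1       # assumed cost when memory sits beside compute

separate = layer_energy_pj(N_MACS, BYTES_MOVED, PJ_PER_MAC, PJ_PER_BYTE_OFF_CHIP)
local = layer_energy_pj(N_MACS, BYTES_MOVED, PJ_PER_MAC, PJ_PER_BYTE_LOCAL)

print(separate, local)   # 101000000 versus 2000000 picojoules
print(separate / local)  # 50.5
```

Under these assumptions almost all of the energy in the conventional case goes to moving data rather than computing on it, which is the cost that in-memory designs aim to remove.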

Who is Doing the Research: A Global Effort with Finnish Echoes

While American giants like IBM and Intel lead in hardware development, the research landscape is global. European initiatives, often less publicized but equally vital, are making significant contributions. The Human Brain Project, a large-scale European research initiative that ran from 2013 to 2023, was instrumental in advancing our understanding of brain simulation and neuromorphic engineering. Universities across Europe, including those in Finland, are actively exploring SNNs and their applications.

Here in Finland, our education system, known for its rigorous scientific grounding, has produced researchers contributing to this field. While we may not have the same scale of corporate investment as Silicon Valley, our focus on practical, robust engineering and sustainable solutions aligns well with the principles of neuromorphic design. As Professor Jari Nurmi from Tampere University, a leading figure in embedded systems, once remarked, “Finland's approach is quietly revolutionary. We do not chase every fleeting trend, but focus on foundational improvements that offer lasting value.” This patient, methodical approach is well suited to the long-term development that neuromorphic systems require.

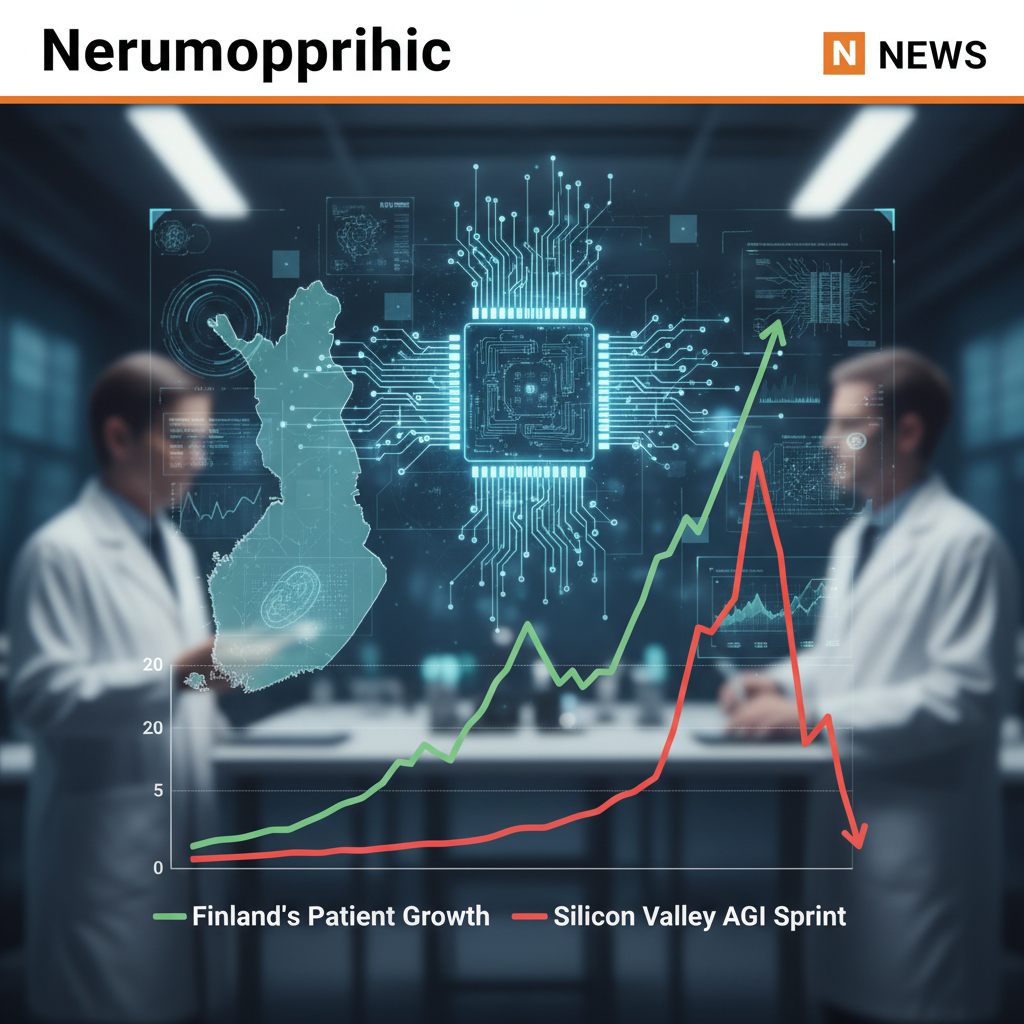

Implications and Next Steps: A Marathon, Not a Sprint

The immediate future of AGI will likely still be dominated by scaled-up transformer models and conventional hardware. However, the long-term trajectory, particularly for truly autonomous and energy-constrained AI, points toward neuromorphic architectures. Imagine AI embedded in everything from smart sensors operating on minimal power in Arctic conditions to advanced robots that learn and adapt in real time without constant cloud connectivity. The applications are vast and compelling.

For Finland, with its strong heritage in telecommunications (Nokia taught us something about reinvention) and its commitment to sustainability, neuromorphic computing represents a strategic area of interest. Our expertise in low-power electronics, embedded systems, and robust software development positions us well to integrate and innovate with these emerging technologies. The sauna principle of AI development (slow heat, lasting results) applies here perhaps more than anywhere else. It is not about who gets there first with the loudest bang, but who builds the most resilient and sustainable path forward.

Widespread adoption of neuromorphic chips in general-purpose computing is still years away, perhaps a decade or more, but the progress is undeniable. The challenges include developing robust programming paradigms for SNNs, creating efficient compilers, and integrating these novel architectures into existing software ecosystems. However, the potential rewards, in terms of energy efficiency, real-time learning, and true intelligence, are too significant to ignore. The race to AGI is not just about raw power but about intelligent design, and in that race, neuromorphic computing offers a compelling, sustainable alternative.