The digital frontier of national security is a landscape increasingly dominated by algorithms and artificial intelligence. At its heart, a company named Palantir Technologies has carved out a formidable niche, its name whispered in the corridors of power from Washington to Westminster. But what exactly do its platforms, Gotham and Foundry, represent for the future of statecraft, and how do its controversial government contracts resonate in a world growing wary of data supremacy? Is this a necessary evil, a technological imperative, or a dangerous new normal that could reshape the very definition of sovereignty?

To understand Palantir's current trajectory, one must first appreciate its origins. Founded in 2003 with early investment from the CIA's venture capital arm, In-Q-Tel, Palantir was built on the premise of assisting intelligence agencies with complex data analysis. Its initial product, Gotham, became a cornerstone for counter-terrorism efforts, allowing disparate datasets to be integrated and analyzed for patterns that human analysts might miss. Later, Foundry emerged, extending this analytical power to commercial enterprises and other government agencies, focusing on operational efficiency and supply chain optimization. The company's history is steeped in secrecy, its operations often shrouded in non-disclosure agreements, fueling both its mystique and its critics' concerns.

Today, Palantir's reach is extensive. It reportedly holds contracts with numerous US government entities, including the Department of Defense, the FBI, and ICE, as well as defense ministries in allied nations. Its platforms are deployed in diverse scenarios, from tracking disease outbreaks to managing military logistics. For instance, during the COVID-19 pandemic, several governments used Palantir's technology to manage vaccine distribution and track infection rates, a testament to its capabilities in crisis management. Yet these deployments invariably spark debates about privacy, data ethics, and the potential for algorithmic bias. Critics argue that the opacity of Palantir's algorithms and the sensitivity of the data they process create an accountability vacuum, enabling surveillance on an unprecedented scale.

My sources in the tech sector confirm that the company's annual revenue figures, while not always transparently broken down by segment, consistently show a significant portion derived from government contracts. In its latest earnings reports, Palantir has emphasized its growing commercial segment, yet the government sector remains its bedrock and is often seen as a strategic entry point for broader market penetration. The company's CEO, Alex Karp, has often articulated a vision of the West needing to leverage its technological superiority to maintain its geopolitical standing. This perspective, while perhaps pragmatic for some, raises red flags for others who fear the erosion of civil liberties.

Indeed, the implications of Palantir's pervasive government contracts extend far beyond the immediate operational benefits. They touch upon fundamental questions of state power and individual freedom. As Dr. Evgeny Morozov, a prominent critic of Silicon Valley's influence on governance, remarked, "The allure of efficiency often blinds us to the long-term societal costs of handing over critical state functions to private, opaque entities." His sentiment echoes through many European capitals, where concerns about data sovereignty and external technological dependence are particularly acute. The European Union, for example, has been actively pursuing its own digital sovereignty initiatives, seeking to reduce reliance on non-EU tech giants, a policy that directly impacts companies like Palantir.

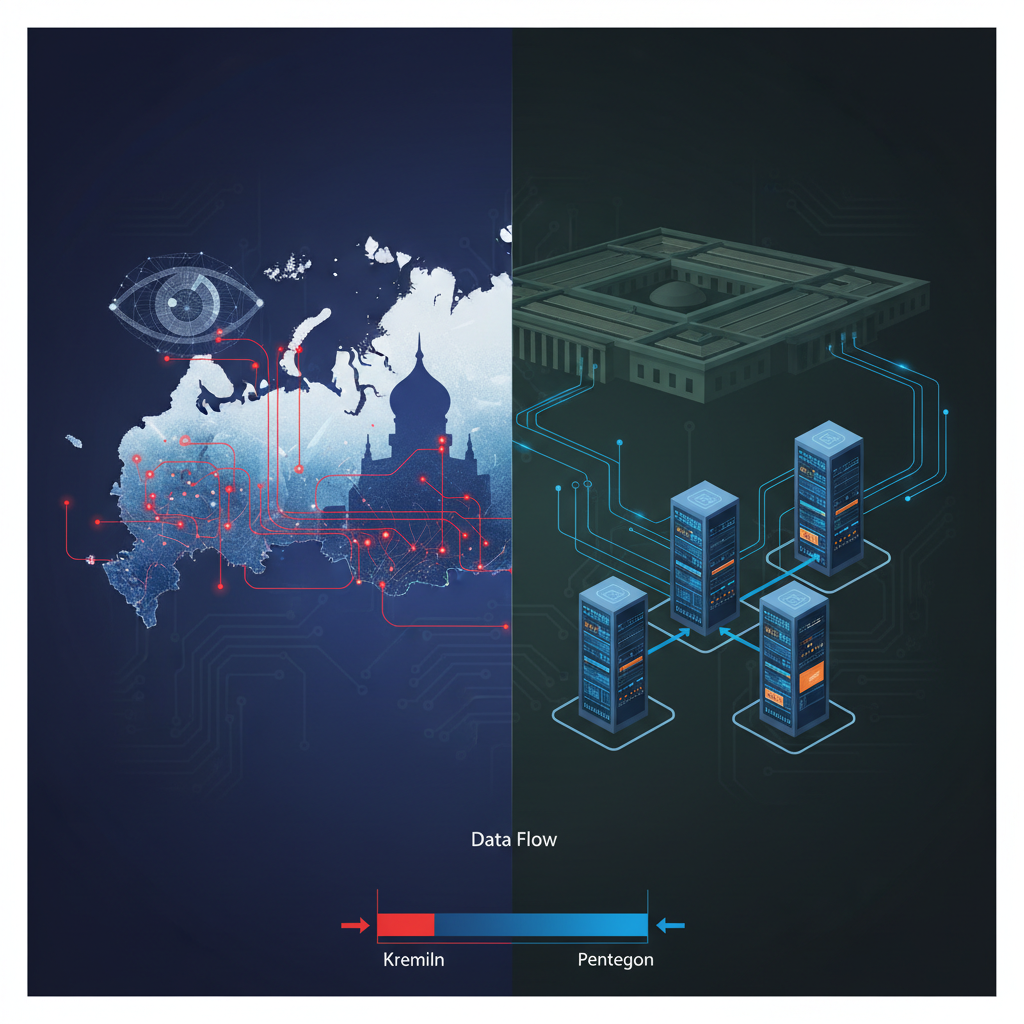

Consider the Russian context. While direct contracts between Palantir and the Russian government are, for obvious reasons, non-existent, the trend of integrating advanced AI for state functions is keenly observed. The Kremlin's digital strategy reveals a clear ambition to develop domestic AI capabilities for defense, intelligence, and public administration. Russian companies like Yandex, for instance, are at the forefront of developing sophisticated AI systems, from search algorithms to autonomous vehicles. The challenge for Moscow, however, lies in balancing technological advancement with the impact of international sanctions, which restrict access to advanced hardware and software components. This creates a complex environment where the West's reliance on companies like Palantir serves as both a cautionary tale and a benchmark for indigenous development.

"The ability to process vast quantities of unstructured data and derive actionable intelligence is no longer a luxury, but a necessity for any modern state," observed Andrei Soldatov, a Russian investigative journalist and expert on security services, during a recent online seminar. "The question is not if, but how, this capability is acquired and, crucially, how it is governed." This perspective highlights the universal appeal of Palantir's core offering, even as its methods remain contentious. The company's platforms promise to turn chaos into order, a seductive proposition for any government grappling with complex global challenges.

However, the very power of these platforms necessitates rigorous oversight. The potential for misuse, for example in targeting specific populations or stifling dissent, is a constant concern. The lack of independent audits for Palantir's government deployments, a common criticism, exacerbates these fears. As one former intelligence official, speaking anonymously due to ongoing non-disclosure agreements, confided, "The algorithms are only as ethical as the people who design them and the policies that govern their use. The black box nature of some of these systems makes true accountability incredibly difficult." This sentiment resonates deeply with the Russian experience, where state control over information and technology is a well-established norm, and the potential for AI to augment such control is not lost on observers.

So, is Palantir's model of pervasive government contracts a fad or the new normal? Given the accelerating pace of global geopolitical shifts and the increasing complexity of national security threats, it is difficult to imagine a retreat from data-driven intelligence. The demand for sophisticated analytical tools will only grow. However, the future will likely see increased scrutiny and demands for transparency. Governments, particularly in democratic nations, will face mounting pressure from civil society and privacy advocates to establish clearer ethical guidelines and oversight mechanisms for AI deployments. The European Union's comprehensive AI Act is a prime example of this emerging regulatory landscape, seeking to impose strict rules on high-risk AI systems, a category into which some civilian deployments of Palantir's platforms, such as those in law enforcement or migration control, could fall. The Act does, however, exclude systems used exclusively for military, defense, or national security purposes, which leaves much of Palantir's core business outside its scope.

Ultimately, Palantir's journey reflects a broader societal reckoning with the power of artificial intelligence. Its controversial government contracts are not merely business transactions; they reflect a fundamental shift in how states perceive and wield power in the digital age. While the allure of its capabilities is undeniable, the path forward must be paved with transparency, accountability, and a steadfast commitment to democratic values, lest the tools designed to protect us become instruments of an unseen, algorithmic control. The conversation around Palantir is not just about technology; it is about the very soul of governance in the 21st century, a debate that resonates from the Pentagon's secure facilities to the bustling tech hubs of Moscow and, indeed, every corner of our increasingly interconnected world. For further insights into the global AI landscape, one might consult TechCrunch's AI section or MIT Technology Review for deeper analysis of these evolving trends.