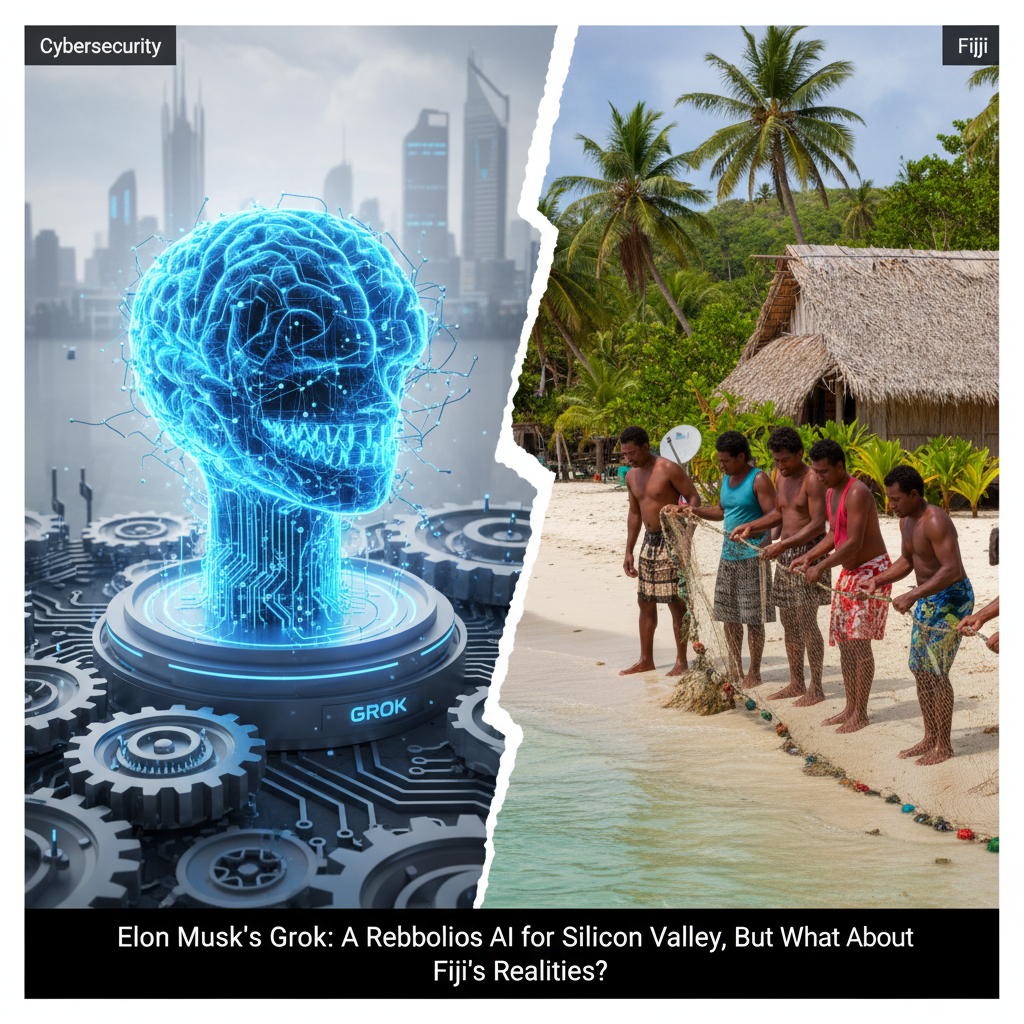

The tech world, as usual, is buzzing. This time, the noise is mostly about Elon Musk's xAI and its flagship large language model, Grok. The narrative is clear: Grok is the challenger, the disruptor, the 'rebellious' alternative to OpenAI's ChatGPT, designed to answer questions with a bit more edge and access to real-time information from X, formerly Twitter. In Silicon Valley, this might be seen as a groundbreaking philosophical shift in AI development, a move towards unfiltered truth, or at least, unfiltered opinion. But here in Fiji, we face the future with clear eyes, and the question isn't which AI has the most attitude; it's which AI can help us keep our heads above water, literally and figuratively.

Musk's vision for Grok is rooted in a desire for an AI that is less constrained by what he perceives as 'woke' biases or overly cautious guardrails. He argues that an AI should be able to tackle controversial topics and provide a wider range of perspectives, even if those perspectives are sometimes unpalatable. This approach has certainly generated headlines and drawn a distinct line in the sand against OpenAI's more safety-first, alignment-focused development. The divergence in philosophy is visible in each company's public record: while OpenAI has invested heavily in alignment research, publishing numerous papers on safety and ethical AI, xAI's public statements often emphasize speed and a less filtered output. According to a recent analysis by TechCrunch, xAI's development cycle for Grok has been remarkably swift, leveraging Musk's existing infrastructure and data streams.

For us in the Pacific, the debate over AI's 'personality' or its willingness to engage in 'controversial' discourse feels a bit distant, almost luxurious. Our challenges are fundamental: rising sea levels, increasingly severe cyclones, food security, and ensuring our communities are connected and resilient. When I look at AI, I don't see a tool for generating sarcastic tweets or debating abstract philosophical points. I see a potential lifeline, a means to predict weather patterns with greater accuracy, to optimize resource allocation during a disaster, or to bridge the digital divide in remote island communities.

Consider the practical applications. We've seen promising pilot programs, like the one run by the University of the South Pacific's climate research unit, which used AI to analyze historical weather data and predict cyclone paths with 15% greater accuracy than traditional models. This isn't about a 'rebellious' AI; it's about a reliable one. "The accuracy of these predictions directly impacts our ability to evacuate communities and protect infrastructure," explained Dr. Alisi Vunivalu, a senior climate scientist at USP. "A 15% improvement might sound small to some, but it translates to saved lives and millions of dollars in avoided damages for us." This is the kind of data-driven impact we need.

Musk's approach, while intriguing for its boldness, raises questions about utility in contexts like ours. If Grok is designed to be more 'truth-seeking' by accessing real-time, unfiltered information from X, how does that translate to actionable intelligence for climate adaptation? The platform X, while a source of immediate information, is also notoriously rife with misinformation and unverified claims. An AI trained on such a firehose of data, without robust filtering and verification mechanisms, could inadvertently amplify harmful narratives or provide inaccurate information during a crisis. For a nation where accurate, timely information can be the difference between safety and disaster, this is a serious concern.

"The Pacific way of problem-solving involves collaboration, consensus, and a deep understanding of our environment," says Ratu Semi Koro, Director of Fiji's National Disaster Management Office. "We need AI that augments these values, not one that introduces more noise or uncertainty. Our systems must be trustworthy, especially when lives are on the line." He pointed to the need for AI models that can process local dialects, understand traditional ecological knowledge, and operate effectively even with limited internet connectivity, a common issue during and after natural disasters.

The global AI landscape is a dynamic one. Companies like Google, with its Gemini models, and Anthropic, with its Constitutional AI, are also pushing boundaries, but often with a more explicit focus on safety, alignment, and responsible deployment. Google's recent initiatives in developing lightweight, efficient AI models for edge devices, for instance, hold significant promise for regions with limited infrastructure. Imagine an AI model running locally on a solar-powered device in a remote Fijian village, providing agricultural advice or early warning for heavy rainfall, without needing constant high-bandwidth internet access. That's a game-changer.

Small island, big challenges, smart solutions. This isn't just a catchy phrase; it's our reality. The focus for us must remain on practical, deployable AI that addresses our most pressing issues. While the philosophical debates surrounding AI's 'personality' or its 'rebellious' streak might captivate the tech elite, our priority is survival and sustainable development. Climate-vulnerable nations need tools that are robust, reliable, and tailored to specific local needs, not just general-purpose chatbots, however clever they might be.

There's a significant opportunity for AI developers to engage with communities like ours, to understand the unique constraints and opportunities. Instead of building AI in a vacuum, or solely for the needs of the developed world, a more inclusive approach could lead to truly transformative technologies. This would involve data collection that respects local customs, model training that incorporates diverse knowledge systems, and deployment strategies that account for infrastructure limitations. The potential for AI to aid in everything from marine conservation to sustainable tourism is immense, but it requires a grounded, practical perspective.

Ultimately, the success of any AI model, be it Grok, ChatGPT, or Gemini, will be measured not by its ability to generate witty retorts or shock value, but by its tangible impact on real-world problems. For Fiji and other Pacific Island nations, that impact is most keenly felt in our fight against climate change and our quest for sustainable development. The tech world would do well to remember that innovation isn't just about pushing boundaries; it's about solving problems that matter, especially for those on the front lines of global challenges. The future of AI, from our perspective, isn't about whose model is more 'rebellious', but whose model is truly resilient and relevant. For more insights into how AI is shaping global tech trends, you can always check out The Verge's AI section or Reuters' technology coverage.

The debate over Grok's philosophy also touches on the broader theme of AI governance and societal impact, which is relevant to any AI deployment, including those in Fiji. The question of who pays the price when AI malfunctions is a universal one, regardless of the AI's philosophical bent. Coverage of Canada's legal framework for AI malfunctions sheds more light on the complexities involved, particularly around accountability. It underscores that beyond the hype and the philosophical battles, there are very real, very practical questions about how these powerful tools are managed and regulated, questions that are just as vital for us in Fiji as they are for Canada.